From Silicon Valley to the UN, the question of who to blame when AI goes wrong is no longer an esoteric regulatory matter, but one of national importance.

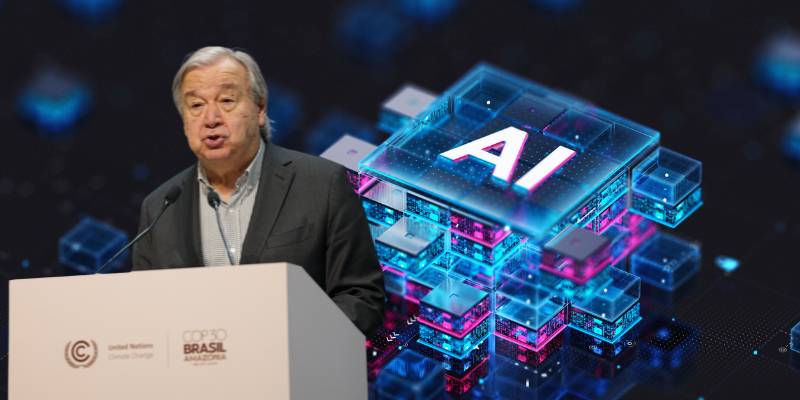

This week, the Secretary-General of the United Nations asked that question, highlighting a key issue in discussions about the ethics and regulation of AI. He asked who should be held responsible if AI systems cause harm, discriminate, or act beyond human intent.

The comments were a clear warning to national leaders, as well as tech industry executives, that AI capabilities are outpacing regulations, as previously reported.

But it wasn’t just the warning that stood out. So did the tone. There was a feeling of resentment.

Even despair. If AI-powered machines are used to make decisions involving life and death, livelihoods, borders and safety, then no one can simply conclude that everything is too complicated.

The Secretary-General said responsibility “must be shared, between developers, deployers and administrators.”

The idea echoes long-standing suspicions at the UN about the unbridled power of technology, suspicions that have been rife in UN debates on digital governance and human rights.

The timing matters. While governments try to draft AI laws as the technology changes rapidly, Europe is already leading the way, passing ambitious laws that will apply to the most dangerous AI products and establishing a level of control that could serve as a beacon – or a cautionary tale – for other countries.

But, honestly: rules on paper will not, by themselves, rein in that power. The Secretary-General’s remarks land in a world where AI is already being used in immigration screening, policing, creditworthiness assessments, and military selection.

The public has long been warning about the dangers of AI in the absence of accountability. Without it, AI becomes the perfect scapegoat for human decision-making with very human consequences: “the algorithm made me do it.”

There is also a geopolitical issue that has not yet been discussed: what will happen if one country’s interpretation of AI rules does not match that of a neighboring country?

What will happen if AI crosses borders? Can we talk about AI deployment rights? Antonio Guterres, the UN Secretary-General, spoke about the need for global guidelines for the development and use of AI, as is done for nuclear and climate regulation.

And this is no easy task in a world where international relations are fraying and countries are retreating from international agreements.

My take? This was not social media chatter. This was a line-drawing speech. The message was not complicated, even if the problem is difficult to solve: AI isn’t exempt from accountability because it’s smarter or faster or more profitable.

There must be an entity that is accountable for AI’s results. And the longer the world takes to decide who that will be, the more painful and difficult the decision will become.