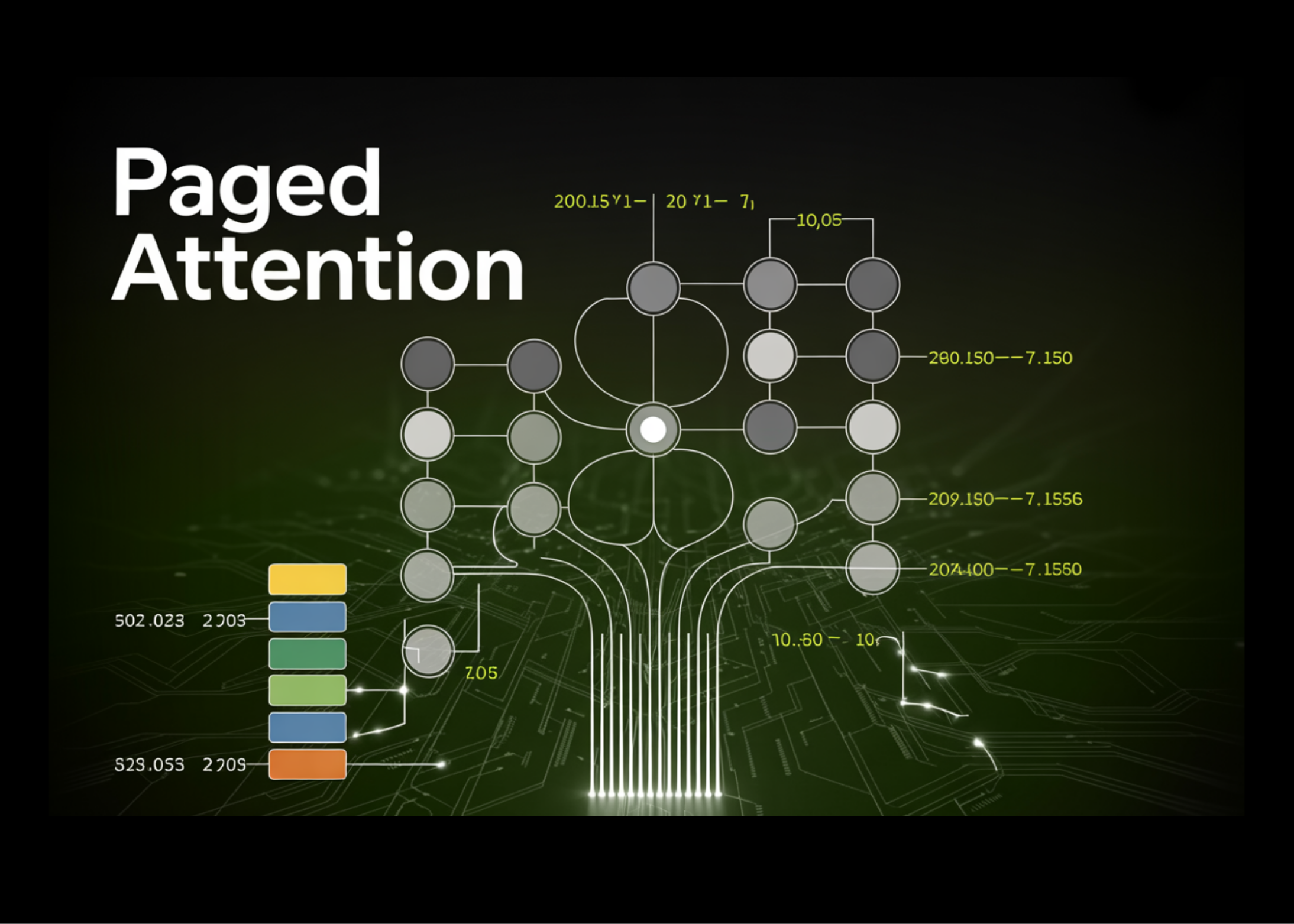

Paged Attention in Large Language Models (LLMs)

When serving LLMs at scale, the real bottleneck is often GPU memory rather than compute, mainly because each request needs a KV cache to store per-token keys and values. In a typical setup, a large fixed memory block is reserved for each request based on the maximum sequence length, which leaves much of that space unused and limits how many requests can run concurrently. Paged …
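To make the cost of fixed reservation concrete, here is a rough back-of-the-envelope sketch. The model shape (32 layers, 32 KV heads, head dimension 128, 16-bit values), the 2048-token maximum, and the 512-token actual request are illustrative assumptions, not figures from this post, and the helper names are hypothetical.

```python
def kv_bytes_per_token(num_layers, num_kv_heads, head_dim, bytes_per_elem=2):
    """Bytes of KV cache needed for one token.

    The factor 2 accounts for storing one key and one value vector
    per layer and per KV head.
    """
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem


def reserved_vs_used(max_seq_len, actual_len, per_token_bytes):
    """Compare a max-length reservation with what a request really uses.

    Returns (reserved_bytes, used_bytes, wasted_fraction).
    """
    reserved = max_seq_len * per_token_bytes
    used = actual_len * per_token_bytes
    return reserved, used, 1 - used / reserved


# Assumed, illustrative model shape (hypothetical values).
per_token = kv_bytes_per_token(num_layers=32, num_kv_heads=32, head_dim=128)

# A request that ends after 512 tokens but was given room for 2048.
reserved, used, wasted = reserved_vs_used(
    max_seq_len=2048, actual_len=512, per_token_bytes=per_token
)

print(f"per token: {per_token / 2**20:.2f} MiB")
print(f"reserved:  {reserved / 2**30:.2f} GiB")
print(f"used:      {used / 2**30:.2f} GiB")
print(f"wasted:    {wasted:.0%}")
```

Under these assumptions, a single short request holds a full gigabyte while using a quarter of it, so the number of requests that fit on one GPU is set by the worst case rather than by actual usage.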