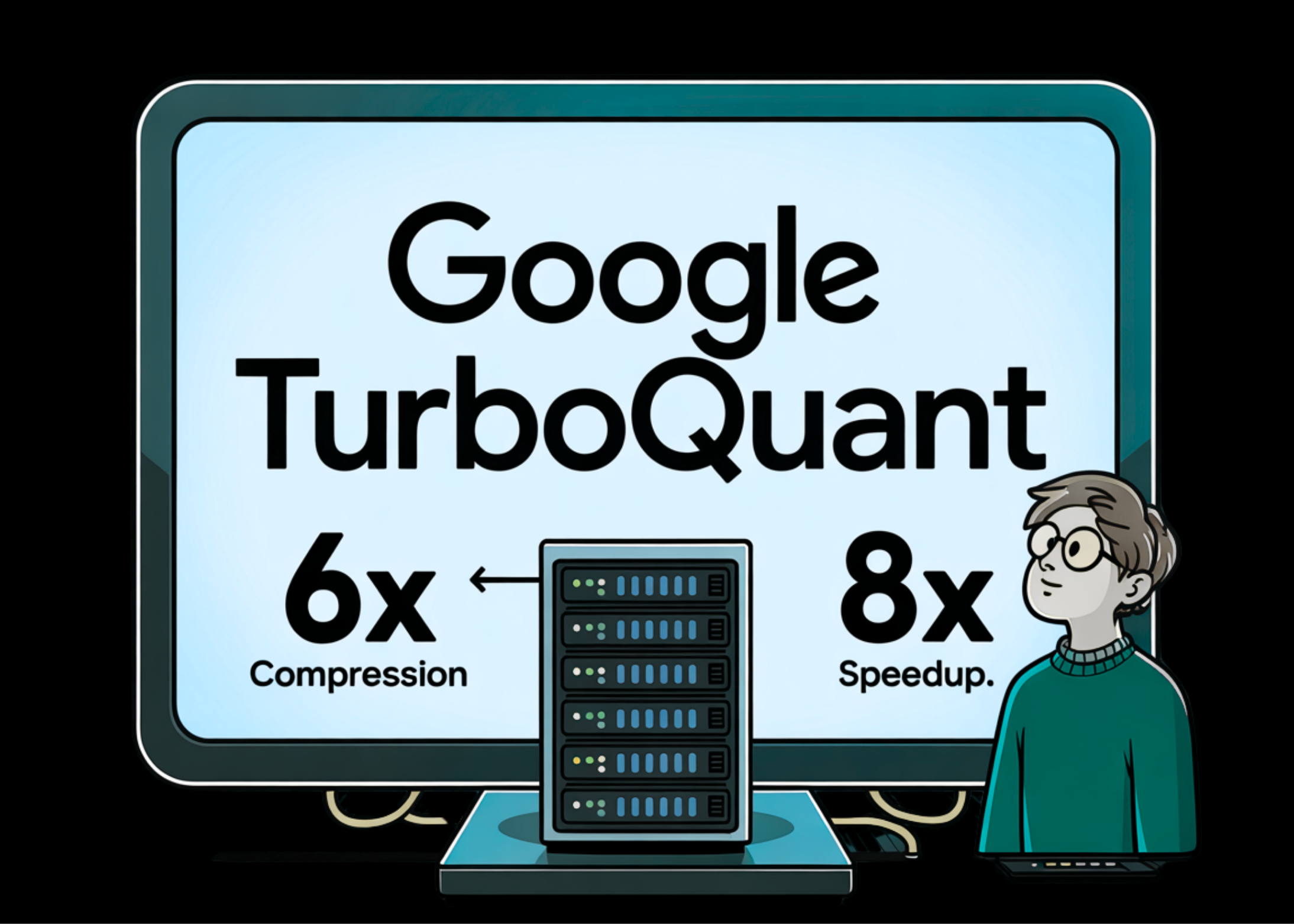

Google Introduces TurboQuant: A New Compression Algorithm That Reduces LLM Key-Value Cache Memory by 6x and Delivers Up to 8x Speedup, All with Zero Loss of Accuracy

The scaling of large language models (LLMs) is increasingly constrained by the memory interface between High-Bandwidth Memory (HBM) and SRAM. In particular, the Key-Value (KV) cache grows with both model size and context length, creating a significant bottleneck for long-context inference. Google's research team has proposed TurboQuant, a data-oblivious quantization framework designed to achieve very …
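
To make the scaling concrete, here is a minimal back-of-the-envelope sketch of how KV cache size grows with model dimensions and context length. The model configuration (32 layers, 8 KV heads, head dimension 128, 128,000-token context, 16-bit values) is a hypothetical example, not taken from the article, and the 6x factor is simply the headline figure applied as a divisor; it does not model how TurboQuant itself works.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   batch_size=1, bytes_per_value=2):
    """Estimate KV cache size in bytes for a decoder-only transformer.

    The leading factor of 2 accounts for storing one key tensor and one
    value tensor per layer. bytes_per_value=2 corresponds to 16-bit storage.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


# Hypothetical model configuration (an assumption for illustration only).
baseline = kv_cache_bytes(num_layers=32, num_kv_heads=8,
                          head_dim=128, seq_len=128_000)

# Apply the 6x memory reduction quoted in the headline as a plain divisor.
compressed = baseline / 6

GIB = 2 ** 30
print(f"16-bit KV cache: {baseline / GIB:.2f} GiB")
print(f"After 6x compression: {compressed / GIB:.2f} GiB")
```

Because the size is linear in `seq_len`, doubling the context length doubles the cache, which is why long contexts put so much pressure on memory.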