Video foundation models can paint a beautiful frame. They are still notoriously bad at holding it together. Push the camera down a hallway in Wan 2.1 or CogVideoX and walls warp, objects morph, and details vanish, a giveaway that these models fit 2D pixel correlations rather than simulate a 3D scene.

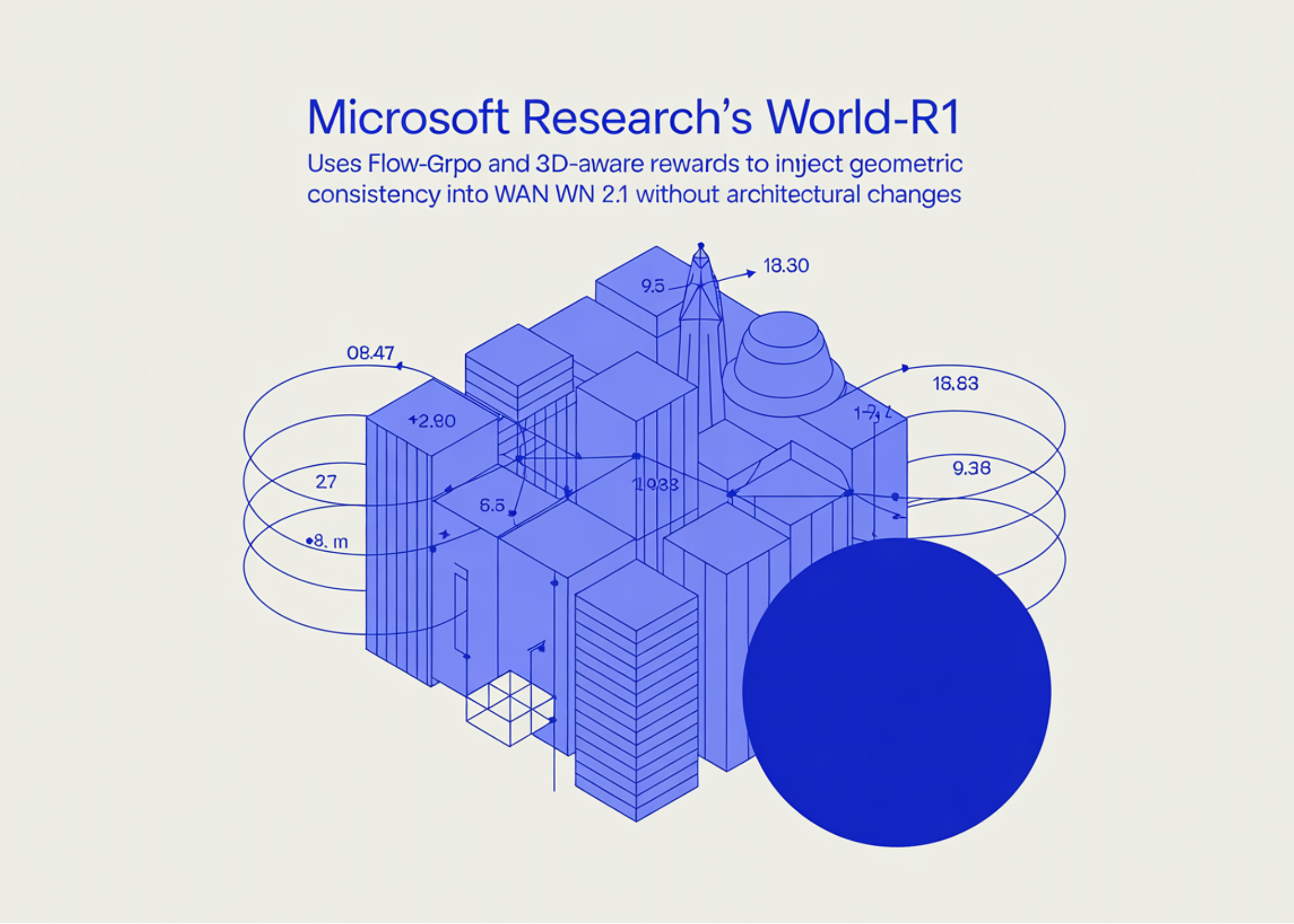

A team of researchers from Microsoft Research and Zhejiang University has presented World-R1, a framework that aligns video generation with 3D constraints through reinforcement learning. The research team builds on recent findings that video foundation models already embed rich 3D geometry information. The job is to wake up that latent information rather than steer the model with expensive 3D assets. World-R1 does this by post-training an existing text-to-video (T2V) model with reinforcement learning, using rewards derived from pre-trained 3D foundation models and a vision-language critic. The base architecture is left untouched and inference cost is unchanged.

Two World-R1 variants are released: World-R1-Small (built on Wan2.1-T2V-1.3B) and World-R1-Large (built on Wan2.1-T2V-14B).

The setup: Flow-GRPO on a flow-matching video model

World-R1 uses Flow-GRPO-Fast, a recent adaptation of GRPO to flow-matching diffusion models. Flow-GRPO converts the deterministic sampling ODE into a reverse-time SDE so that the policy is stochastic enough for advantage estimation, and then optimizes the clipped GRPO objective with KL regularization against the reference policy. The Fast variant injects SDE noise only at randomly selected intermediate steps to reduce rollout cost.
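To make the GRPO part concrete, here is a minimal NumPy sketch of the two pieces GRPO is generally built on: rewards normalized within each group of samples for the same prompt, and a PPO-style clipped surrogate. This is an illustration under assumptions, not the World-R1 training code. The function names, the clip range of 0.2, and the random rewards are hypothetical, and the KL term against the reference policy is left out.

```python
import numpy as np

def group_relative_advantages(rewards, eps=1e-8):
    """Normalize rewards inside each group: (r - group mean) / (group std + eps).

    rewards: array of shape (num_groups, group_size), one row per prompt.
    """
    rewards = np.asarray(rewards, dtype=np.float64)
    mean = rewards.mean(axis=1, keepdims=True)
    std = rewards.std(axis=1, keepdims=True)
    return (rewards - mean) / (std + eps)

def clipped_surrogate(ratio, advantage, clip_eps=0.2):
    """PPO-style clipped objective (to be maximized).

    ratio: new-policy / old-policy likelihood ratio for a sampled step.
    clip_eps=0.2 is an assumed value, not one reported in the article.
    """
    ratio = np.asarray(ratio, dtype=np.float64)
    unclipped = ratio * advantage
    clipped = np.clip(ratio, 1.0 - clip_eps, 1.0 + clip_eps) * advantage
    return np.minimum(unclipped, clipped)

# Placeholder rewards for 48 groups of G=8 videos each (random, for shape only).
rng = np.random.default_rng(0)
rewards = rng.uniform(0.0, 3.0, size=(48, 8))
advantages = group_relative_advantages(rewards)
```

Because advantages are computed relative to the other samples in the same group, no separate value network is needed; each video is only judged against its siblings generated from the same prompt.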

Training runs at 832×480 resolution on 48 NVIDIA H200 GPUs for the Small model and 96 H200s for the Large model, with a GRPO group size of G=8 across 48 parallel groups.

The 3D-aware reward: analysis-by-synthesis

The interesting work happens in the reward. For each generated video x, the system reconstructs a 3D Gaussian Splatting (3DGS) representation ΦGS using Depth Anything 3 and recovers the estimated camera trajectory Ê. The composite 3D reward is:

R3D = Smeta + Srecon + Straj

- Smeta renders ΦGS from a meta-view, a camera pose offset from the generation trajectory, and asks Qwen3-VL to score the rendering from 0 to 9 as a “3D vision expert,” penalizing floaters, billboard artifacts, and stretched textures that look fine from the original viewpoint but fall apart off-axis.

- Srecon re-renders the scene along Ê and compares the result to x via 1 − LPIPS.

- Straj quantifies the deviation between the requested trajectory E and the recovered Ê using L2 translation distance and geodesic rotation distance, wrapped in a negative exponential.

A general aesthetic term RGen, computed as the mean HPSv3 score over the first K frames, is added with weight λGen = 1 to keep visual quality from collapsing under geometric pressure.
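The reward terms above can be sketched in a few lines of NumPy. This is a hedged illustration, not the paper's implementation: the function names, the equal weighting of translation and rotation error, and the averaging over frames are assumptions. The meta-view score, the LPIPS value, and the HPSv3-based aesthetic score are taken as precomputed inputs, since they come from external models.

```python
import numpy as np

def geodesic_rotation_distance(R_a, R_b):
    """Angle in radians of the relative rotation R_a^T R_b."""
    cos_theta = (np.trace(R_a.T @ R_b) - 1.0) / 2.0
    return float(np.arccos(np.clip(cos_theta, -1.0, 1.0)))

def trajectory_score(E_req, E_est, w_trans=1.0, w_rot=1.0):
    """exp(-(translation error + rotation error)), averaged over frames.

    E_req, E_est: arrays of shape (T, 4, 4) holding per-frame extrinsics.
    w_trans and w_rot are assumed weights, not values from the article.
    """
    trans_err = np.mean(np.linalg.norm(E_req[:, :3, 3] - E_est[:, :3, 3], axis=1))
    rot_err = np.mean([
        geodesic_rotation_distance(a[:3, :3], b[:3, :3])
        for a, b in zip(E_req, E_est)
    ])
    return float(np.exp(-(w_trans * trans_err + w_rot * rot_err)))

def total_reward(s_meta, lpips_value, s_traj, r_gen, lambda_gen=1.0):
    """R3D + lambda_gen * RGen, with Srecon = 1 - LPIPS."""
    s_recon = 1.0 - lpips_value
    r_3d = s_meta + s_recon + s_traj
    return r_3d + lambda_gen * r_gen

# A trajectory that is recovered perfectly gets the maximum trajectory score.
E = np.tile(np.eye(4), (5, 1, 1))
perfect = trajectory_score(E, E)
```

The negative exponential maps any non-negative error into the range (0, 1], so a perfectly recovered trajectory scores 1 and the score decays smoothly as the recovered camera path drifts from the requested one.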

Camera conditioning through noise warping

Rather than training a CameraCtrl-style adapter, World-R1 follows the Go-with-the-Flow paradigm: the prompt is parsed into motion tokens (push_in, orbit_left, pull_out, etc.), a sequence of camera extrinsics is generated, the extrinsics are converted into a 2D optical flow under a fronto-parallel scene assumption, and that flow is used to perform discrete noise transport on the initial latent. The transported noise preserves unit variance through density-tracking normalization, so the prior distribution is undisturbed while the latent already encodes the requested trajectory. No new parameters, no architecture changes.
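A toy version of this pipeline helps show why the warped noise stays a valid prior. The sketch below is a deliberately simplified stand-in, not the Go-with-the-Flow algorithm or the World-R1 code: the token-to-translation table, the focal length, and the nearest-pixel scatter are all assumptions, and only translation-only tokens are covered. The one idea it keeps is the normalization: noise samples that land on the same pixel are summed and divided by the square root of their count, which keeps unit variance.

```python
import numpy as np

# Hypothetical per-frame camera translations (tx, ty, tz) for two motion tokens.
MOTION_STEPS = {
    "push_in": (0.0, 0.0, 0.05),
    "pull_out": (0.0, 0.0, -0.05),
}

def fronto_parallel_flow(translation, height, width, depth=1.0):
    """Pixel flow (H, W, 2) as (dx, dy) for a camera translation, assuming the
    whole scene is one plane facing the camera at the given depth."""
    tx, ty, tz = translation
    ys, xs = np.meshgrid(np.arange(height), np.arange(width), indexing="ij")
    cx, cy = (width - 1) / 2.0, (height - 1) / 2.0
    f = float(max(height, width))  # assumed focal length in pixels
    x, y = (xs - cx) / f, (ys - cy) / f
    # A plane point (x*depth, y*depth, depth) seen from the moved camera.
    x_new = (x * depth - tx) / (depth - tz)
    y_new = (y * depth - ty) / (depth - tz)
    return np.stack([(x_new - x) * f, (y_new - y) * f], axis=-1)

def warp_noise(noise, flow, rng):
    """Forward-warp noise (H, W, C) along flow with nearest-pixel scatter.

    Colliding samples are summed and divided by sqrt(count) to keep unit
    variance; pixels that receive nothing get fresh Gaussian noise.
    """
    h, w, c = noise.shape
    ys, xs = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")
    tx = np.rint(xs + flow[..., 0]).astype(int)
    ty = np.rint(ys + flow[..., 1]).astype(int)
    valid = (tx >= 0) & (tx < w) & (ty >= 0) & (ty < h)
    target = ty[valid] * w + tx[valid]
    summed = np.zeros((h * w, c))
    np.add.at(summed, target, noise[valid])
    count = np.bincount(target, minlength=h * w)
    warped = rng.standard_normal((h * w, c))
    hit = count > 0
    warped[hit] = summed[hit] / np.sqrt(count[hit])[:, None]
    return warped.reshape(h, w, c)

rng = np.random.default_rng(0)
noise = rng.standard_normal((64, 64, 4))
flow = fronto_parallel_flow(MOTION_STEPS["push_in"], 64, 64)
warped = warp_noise(noise, flow, rng)
```

Applying `warp_noise` frame after frame would give each latent frame noise that is correlated with the previous one along the camera motion, while every individual frame still looks like standard Gaussian noise to the model.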

A text-only dataset, with periodic decoupling to keep dynamics alive

The training data is a synthetic, text-only dataset of about 3,000 prompts generated by Gemini, organized by the WorldScore camera-trajectory taxonomy (intra-scene, inter-scene, composite, static) and spanning five domains: Nature, Urban & Architectural, Micro & Still Life, Fantasy & Surrealism, and Artistic Styles. Text-only training decouples 3D learning from the visual biases of any particular video corpus.

Rigid 3D rewards have a known failure mode: the model collapses to static scenes and stops producing dynamic content. World-R1 mitigates this with periodic decoupled training. Every 100 steps, R3D is switched off and the model is fine-tuned on RGen alone using a subset of about 500 dynamic prompts (waterfalls, crowds, fire, transforming objects). Removing this stage actually raises reconstruction PSNR but drops VBench AVG from 85.21 to 82.64, which is exactly the reward-hacking degradation the research team describes.
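The schedule can be pictured as a small switch on the reward weights. The sketch below is an assumption-laden illustration: the function names are hypothetical, and it treats the decoupled phase as a single step every 100 steps, which the article does not specify.

```python
def reward_weights(step, decouple_every=100, lambda_gen=1.0):
    """Return (weight on the 3D reward, weight on the aesthetic reward).

    On every `decouple_every`-th step the 3D reward is switched off so that
    only the aesthetic reward drives the update.
    """
    if step > 0 and step % decouple_every == 0:
        return 0.0, lambda_gen
    return 1.0, lambda_gen

def prompt_pool(step, all_prompts, dynamic_prompts, decouple_every=100):
    """Use the dynamic-scene subset on decoupled steps, the full set otherwise."""
    w_3d, _ = reward_weights(step, decouple_every)
    return dynamic_prompts if w_3d == 0.0 else all_prompts

# Example with placeholder prompt lists.
all_prompts = ["a quiet library, push_in", "a waterfall, orbit_left"]
dynamic_prompts = ["a waterfall, orbit_left"]
pool_at_200 = prompt_pool(200, all_prompts, dynamic_prompts)
```

The point of the switch is that a static scene is the easiest thing to reconstruct in 3D, so an occasional update driven only by the aesthetic reward on motion-heavy prompts pulls the model back toward dynamic content.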

Understanding the Results

On the 3DGS-based reconstruction protocol, World-R1-Large scores 27.67 PSNR / 0.865 SSIM / 0.162 LPIPS against 19.76 / 0.629 / 0.405 for Wan2.1-T2V-14B, a gain of 7.91 dB in PSNR. World-R1-Small posts a 10.23 dB gain over its 1.3B backbone. On the reconstruction-independent Multi-View Consistency Score (MVCS) borrowed from GeoVideo, World-R1-Large reaches 0.993, ahead of all 3D-conditioned and camera-control baselines (Voyager, ViewCrafter, FlashWorld, ReCamMaster, etc.).

Camera control is competitive with specialized methods: RotErr 1.21, TransErr 1.30, and CamMC 2.95 for the Large model, which edges out CamCloneMaster and ReCamMaster despite not being a dedicated camera-control architecture. VBench scores improve over base Wan 2.1 in Aesthetic Quality, Imaging Quality, Motion Smoothness, and Subject Consistency, with only a slight drop in Background Consistency.

Two empirical results stand out for AI practitioners. A dataset-scaling sweep shows monotonic gains from 1K → 2K → 3K prompts in both 3D consistency and VBench AVG, which suggests the recipe is data-efficient and can scale further. And although training uses short clips, World-R1-Large generalizes to 121-frame generations, raising PSNR from 18.32 to 26.32 over the Wan2.1-T2V-14B backbone. A double-blind study of 25 users reports win rates of 92% for geometric consistency, 76% for camera control accuracy, and 86% for overall preference against Wan 2.1.

Key Takeaways

- RL replaces architectural surgery for 3D consistency. World-R1 post-trains Wan2.1 with Flow-GRPO-Fast instead of bolting on 3D modules or training on 3D-supervised datasets. The base architecture and inference cost are unchanged.

- The reward is analysis-by-synthesis. Each generated video is lifted into a 3D Gaussian Splatting representation with Depth Anything 3 and scored on three axes: meta-view plausibility (judged by Qwen3-VL), reconstruction fidelity (1 − LPIPS), and trajectory alignment. These are combined with an HPSv3 aesthetic reward to protect visual quality.

- Camera control comes from noise warping, not from new parameters. Motion tokens in the prompt are converted into camera extrinsics, projected to 2D optical flow, and used to warp the initial latent with Go-with-the-Flow discrete noise transport. No CameraCtrl-style adapter is required.

- Periodic decoupled training prevents reward hacking. Every 100 steps, the 3D reward is switched off and the model is fine-tuned on the aesthetic reward alone with about 500 dynamic prompts. Removing this stage raises PSNR but VBench tanks: the model collapses to static outputs, which are easy to reconstruct.

- The numbers are large and hold outside the pipeline. World-R1-Large gains 7.91 dB PSNR over Wan2.1-T2V-14B, generalizes to 121-frame videos, and improves the independent MVCS metric, with an overall win rate of 86% in a blind study of 25 users.

Check out the Paper, Code, and Project Page.

Michal Sutter is a data science expert with a Master of Science in Data Science from the University of Padova. With a strong foundation in statistical analysis, machine learning, and data engineering, Michal excels at turning complex data sets into actionable insights.