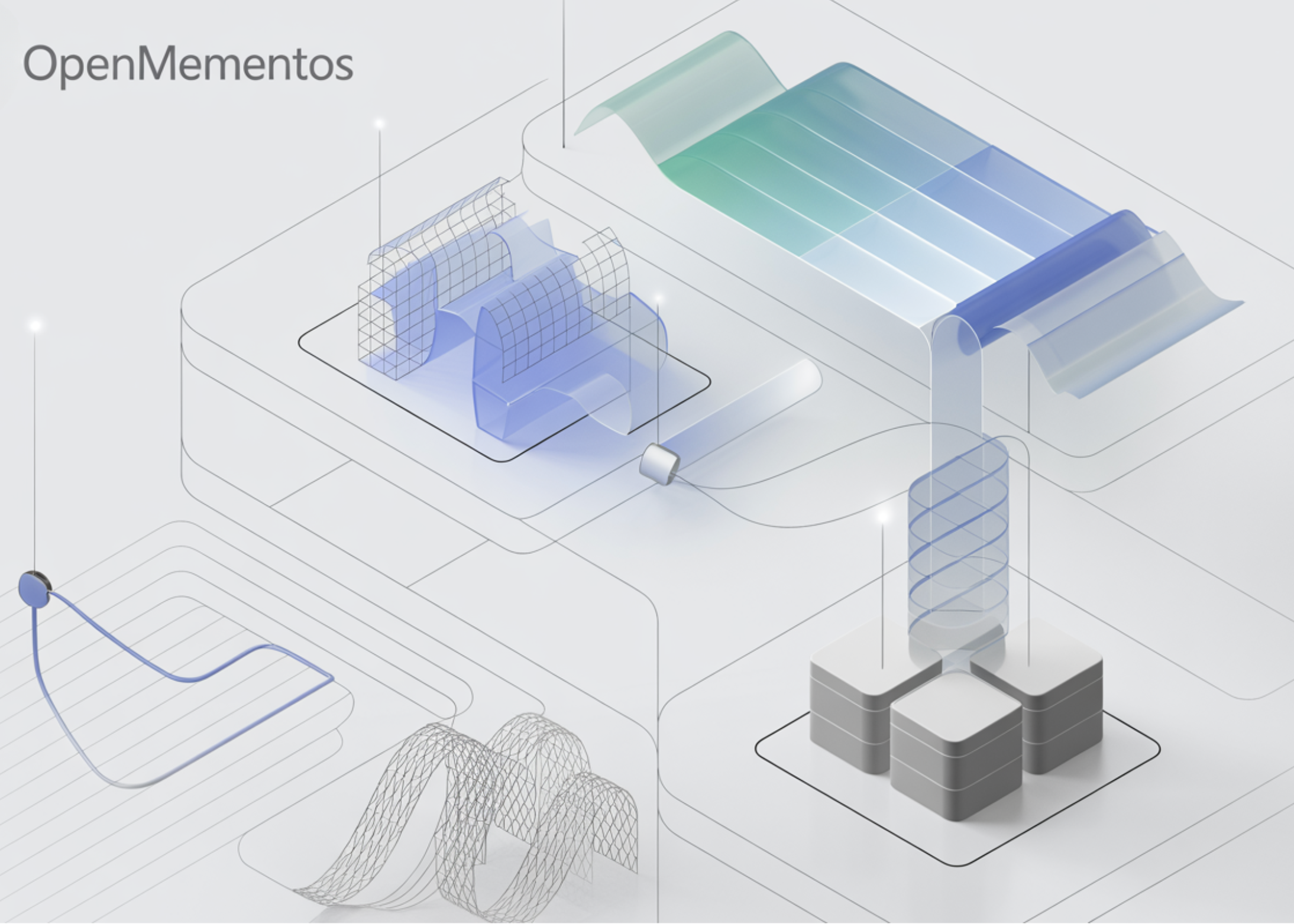

In this tutorial, we work with Microsoft’s OpenMementos dataset and explore how reasoning traces are structured as blocks and mementos in an efficient, Colab-ready workflow. We stream the dataset, parse its special-token format, inspect how reasoning blocks and their summaries are organized, and measure the compression that the memento representation provides across domains. Along the way, we visualize patterns in the dataset, align the streamed format with the richer full subset, simulate inference-time compression, and prepare the data for supervised fine-tuning. The result is both an intuitive and a technical understanding of how OpenMementos captures long-form reasoning while preserving compact summaries that support practical training and analysis.

```python
!pip install -q -U datasets transformers matplotlib pandas

import re, itertools, textwrap
from collections import Counter
from typing import Dict

import pandas as pd
import matplotlib.pyplot as plt
from datasets import load_dataset

DATASET = "microsoft/OpenMementos"

# Stream the train split so nothing is downloaded in full.
ds_stream = load_dataset(DATASET, split="train", streaming=True)
first_row = next(iter(ds_stream))

print("Columns :", list(first_row.keys()))
print("Domain :", first_row["domain"], "| Source:", first_row["source"])
print("Problem head:", first_row["problem"][:160].replace("\n", " "), "...")
```

We install the required libraries and import the tools needed for dataset streaming, parsing, analysis, and visualization. We then connect to Microsoft’s OpenMementos dataset in streaming mode so we can inspect it without downloading the entire dataset locally. By reading the first example, we begin to understand the dataset schema, the problem format, and the domain and source metadata attached to each reasoning trace.
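To make the schema inspection concrete, here is a minimal sketch of a helper that summarizes the fields of one row. The `describe_row` helper and the contents of `toy_row` are hypothetical stand-ins introduced for illustration; only the column names `problem`, `response`, `domain`, and `source` come from the code above.

```python
from typing import Any, Dict, List, Tuple

def describe_row(row: Dict[str, Any], preview: int = 40) -> List[Tuple[str, str, str]]:
    """Return (column, type name, short preview) for every field in a row."""
    out = []
    for key, value in row.items():
        text = str(value).replace("\n", " ")
        if len(text) > preview:
            text = text[:preview] + "..."
        out.append((key, type(value).__name__, text))
    return out

# Hypothetical stand-in for one streamed row; a real row would come from
# next(iter(ds_stream)).
toy_row = {
    "problem": "What is 2 + 2?\nAnswer with a number.",
    "response": "<think>...</think> 4",
    "domain": "math",
    "source": "toy-example",
}

for column, type_name, text in describe_row(toy_row):
    print(f"{column:10s} {type_name:5s} {text}")
```

Passing the real first row instead of `toy_row` should give a quick overview of which columns hold long text and which hold short metadata.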

```python
# The pipes are escaped so the delimiter tokens are matched literally.
BLOCK_RE = re.compile(r"<\|block_start\|>(.*?)<\|block_end\|>", re.DOTALL)
SUMMARY_RE = re.compile(r"<\|summary_start\|>(.*?)<\|summary_end\|>", re.DOTALL)
THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)

def parse_memento(response: str) -> Dict:
    blocks = [m.strip() for m in BLOCK_RE.findall(response)]
    summaries = [m.strip() for m in SUMMARY_RE.findall(response)]
    think_m = THINK_RE.search(response)
    final_ans = response.split("</think>")[-1].strip() if "</think>" in response else ""
    return {"blocks": blocks, "summaries": summaries,
            "reasoning": (think_m.group(1) if think_m else ""),
            "final_answer": final_ans}

parsed = parse_memento(first_row["response"])
print(f"\n→ {len(parsed['blocks'])} blocks, {len(parsed['summaries'])} mementos parsed")
print("First block :", parsed["blocks"][0][:140].replace("\n", " "), "...")
print("First memento :", parsed["summaries"][0][:140].replace("\n", " "), "...")

N_SAMPLES = 500
rows = []
for i, ex in enumerate(itertools.islice(
        load_dataset(DATASET, split="train", streaming=True), N_SAMPLES)):
    p = parse_memento(ex["response"])
    # Skip traces whose blocks and mementos do not pair up one-to-one.
    if not p["blocks"] or len(p["blocks"]) != len(p["summaries"]):
        continue
    blk_c = sum(len(b) for b in p["blocks"])
    sum_c = sum(len(s) for s in p["summaries"])
    blk_w = sum(len(b.split()) for b in p["blocks"])
    sum_w = sum(len(s.split()) for s in p["summaries"])
    rows.append(dict(domain=ex["domain"], source=ex["source"],
                     n_blocks=len(p["blocks"]),
                     block_chars=blk_c, summ_chars=sum_c,
                     block_words=blk_w, summ_words=sum_w,
                     compress_char=sum_c / max(blk_c, 1),
                     compress_word=sum_w / max(blk_w, 1)))
    if (i + 1) % 100 == 0:
        print(f" processed {i+1}/{N_SAMPLES}")

df = pd.DataFrame(rows)
print(f"\nAnalyzed {len(df)} rows. Domain counts:")
print(df["domain"].value_counts().to_string())

per_dom = df.groupby("domain").agg(
    n=("domain", "count"),
    median_blocks=("n_blocks", "median"),
    median_block_words=("block_words", "median"),
    median_summ_words=("summ_words", "median"),
    median_char_ratio=("compress_char", "median"),
    median_word_ratio=("compress_word", "median"),
).round(3)
print("\nPer-domain medians (ratio = mementos / blocks):")
print(per_dom.to_string())
```

We define a regex-based parser that extracts the reasoning blocks, the memento summaries, the main thinking section, and the final answer from each response. We test the parser on the first streamed example and confirm that the block-summary structure is captured correctly. We then run a streaming analysis over multiple samples to compute block counts, word counts, character counts, and compression ratios, which show how the dataset behaves across samples and domains.
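Because `|` is the alternation operator in regular expressions, the delimiter tokens only match literally when their pipes are escaped. The following is a minimal sketch on a synthetic trace; the trace text is invented for illustration and is not taken from the dataset, and the names `BLOCK`, `SUMMARY`, and `toy` are local to this example.

```python
import re

# Escape the pipes so "<|block_start|>" is matched as a literal token.
BLOCK = re.compile(r"<\|block_start\|>(.*?)<\|block_end\|>", re.DOTALL)
SUMMARY = re.compile(r"<\|summary_start\|>(.*?)<\|summary_end\|>", re.DOTALL)

# Synthetic trace for illustration only.
toy = (
    "<think>"
    "<|block_start|>Let x be the unknown. Then 2x + 3 = 11, so 2x = 8 and x = 4.<|block_end|>"
    "<|summary_start|>Solved 2x + 3 = 11: x = 4.<|summary_end|>"
    "<|block_start|>Check: 2 * 4 + 3 = 11, which matches the equation.<|block_end|>"
    "<|summary_start|>Verified x = 4.<|summary_end|>"
    "</think>The answer is 4."
)

blocks = [b.strip() for b in BLOCK.findall(toy)]
summaries = [s.strip() for s in SUMMARY.findall(toy)]

# Word-level compression ratio, defined as mementos / blocks.
block_words = sum(len(b.split()) for b in blocks)
summary_words = sum(len(s.split()) for s in summaries)
ratio = summary_words / max(block_words, 1)

# The same pattern without escapes is parsed as five alternatives.
naive = re.findall(r"<|block_start|>(.*?)<|block_end|>", toy, re.DOTALL)

print(len(blocks), "blocks,", len(summaries), "mementos")
print(f"word ratio (mementos / blocks) = {ratio:.2f}")
print("unescaped pattern returns", len(naive), "matches instead of", len(blocks))
```

A ratio below 1 means the mementos are shorter than the blocks they summarize, which is the direction used by the `compress_char` and `compress_word` columns.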

We visualize the structural patterns of the dataset by plotting block counts, compression ratios, and the relationship between block size and memento size. We compare these distributions across domains to see how reasoning is organized differently in math, code, and science examples. We also stream one example from the full subset and examine its additional sentence-level fields and block alignment, which helps us understand the richer annotation pipeline behind the dataset.
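The plots described above can be sketched as follows. This is only an illustrative sketch: `toy_df` is filled with synthetic placeholder values (not measurements from OpenMementos) that reuse the column names of the analysis DataFrame, the domain labels are assumed, and the output file name is arbitrary.

```python
import matplotlib
matplotlib.use("Agg")  # headless backend; omit this line in Colab to plot inline
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd

# Synthetic placeholder data with the same columns as the analysis DataFrame.
rng = np.random.default_rng(0)
n = 150
toy_df = pd.DataFrame({
    "domain": rng.choice(["math", "code", "science"], size=n),
    "n_blocks": rng.integers(3, 30, size=n),
    "block_words": rng.integers(500, 8000, size=n),
})
toy_df["summ_words"] = (toy_df["block_words"] * rng.uniform(0.1, 0.3, size=n)).astype(int)
toy_df["compress_word"] = toy_df["summ_words"] / toy_df["block_words"]

fig, axes = plt.subplots(1, 3, figsize=(16, 4))
for dom, grp in toy_df.groupby("domain"):
    # One overlaid series per domain in each panel.
    axes[0].hist(grp["n_blocks"], bins=15, alpha=0.5, label=dom)
    axes[1].hist(grp["compress_word"], bins=15, alpha=0.5, label=dom)
    axes[2].scatter(grp["block_words"], grp["summ_words"], s=10, alpha=0.6, label=dom)

axes[0].set(title="Blocks per trace", xlabel="n_blocks", ylabel="count")
axes[1].set(title="Word ratio (mementos / blocks)", xlabel="compress_word", ylabel="count")
axes[2].set(title="Block vs. memento size", xlabel="block words", ylabel="memento words")
for ax in axes:
    ax.legend()

fig.tight_layout()
fig.savefig("memento_patterns.png", dpi=120)
```

Swapping `toy_df` for the real `df` built during the streaming analysis should produce the same three panels on actual data.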

```python
def compress_trace(response: str, keep_last_k: int = 1) -> str:
    blocks, summaries = BLOCK_RE.findall(response), SUMMARY_RE.findall(response)
    if not blocks or len(blocks) != len(summaries):
        return response
    out, n = ["<think>"], len(blocks)
    for i, (b, s) in enumerate(zip(blocks, summaries)):
        if i >= n - keep_last_k:
            # Recent pair: keep the full block together with its memento.
            out.append(f"<|block_start|>{b}<|block_end|>")
            out.append(f"<|summary_start|>{s}<|summary_end|>")
        else:
            # Older pair: keep only the memento.
            out.append(f"<|summary_start|>{s}<|summary_end|>")
    out.append("</think>")
    out.append(response.split("</think>")[-1])
    return "\n".join(out)

orig, comp = first_row["response"], compress_trace(first_row["response"], 1)
print(f"\nOriginal : {len(orig):>8,} chars")
print(f"Compressed : {len(comp):>8,} chars ({len(comp)/len(orig)*100:.1f}% of original)")

from transformers import AutoTokenizer

tok = AutoTokenizer.from_pretrained("gpt2")
MEM_TOKENS = ["<|block_start|>", "<|block_end|>",
              "<|summary_start|>", "<|summary_end|>",
              "<think>", "</think>"]
tok.add_special_tokens({"additional_special_tokens": MEM_TOKENS})

def tlen(s): return len(tok(s, add_special_tokens=False).input_ids)

blk_tok = sum(tlen(b) for b in parsed["blocks"])
sum_tok = sum(tlen(s) for s in parsed["summaries"])
print(f"\nTrace-level token compression for this example:")
print(f" block tokens = {blk_tok}")
print(f" memento tokens = {sum_tok}")
print(f" compression = {blk_tok / max(sum_tok,1):.2f}× (paper reports ~6×)")

def to_chat(ex):
    return {"messages": [
        {"role": "user", "content": ex["problem"]},
        {"role": "assistant", "content": ex["response"]},
    ]}

chat_stream = load_dataset(DATASET, split="train", streaming=True).map(to_chat)
chat_ex = next(iter(chat_stream))
print("\nSFT chat example (truncated):")
for m in chat_ex["messages"]:
    print(f" [{m['role']:9s}] {m['content'][:130].replace(chr(10),' ')}...")
```

We simulate inference-time compression by rewriting the reasoning trace so that older blocks are replaced by their mementos while the most recent blocks remain intact. We then compare the original and compressed trace lengths to see how much context can be saved in practice. After that, we load a tokenizer, add the special memento tokens, measure token-level compression, and convert the dataset into an SFT-style chat format suitable for training workflows.
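To see how the number of retained blocks affects context length, here is a small self-contained sketch that sweeps `keep_last_k` over a synthetic three-step trace. The trace content and the local names `compress`, `steps`, and `toy` are invented for illustration, and character counts stand in for token counts so that no tokenizer download is needed.

```python
import re

BLOCK = re.compile(r"<\|block_start\|>(.*?)<\|block_end\|>", re.DOTALL)
SUMMARY = re.compile(r"<\|summary_start\|>(.*?)<\|summary_end\|>", re.DOTALL)

def compress(response: str, keep_last_k: int = 1) -> str:
    """Keep every memento, but keep the full block only for the last k pairs."""
    blocks, summaries = BLOCK.findall(response), SUMMARY.findall(response)
    if not blocks or len(blocks) != len(summaries):
        return response  # malformed trace: leave it untouched
    parts, n = ["<think>"], len(blocks)
    for i, (b, s) in enumerate(zip(blocks, summaries)):
        if i >= n - keep_last_k:
            parts.append(f"<|block_start|>{b}<|block_end|>")
        parts.append(f"<|summary_start|>{s}<|summary_end|>")
    parts.append("</think>")
    parts.append(response.split("</think>")[-1])
    return "\n".join(parts)

# Synthetic three-step trace, invented for illustration.
steps = [
    ("First I expand the product term by term and collect like powers of x.",
     "Expanded the product."),
    ("Next I compare coefficients on both sides to get two linear equations.",
     "Got two linear equations."),
    ("Finally I solve the system by elimination and substitute back to check.",
     "Solved and checked."),
]
toy = "<think>" + "".join(
    f"<|block_start|>{b}<|block_end|><|summary_start|>{s}<|summary_end|>"
    for b, s in steps
) + "</think>a = 2, b = 5"

# Character length of the compressed trace for each choice of keep_last_k.
lengths = {k: len(compress(toy, keep_last_k=k)) for k in range(0, 4)}
for k, n_chars in lengths.items():
    print(f"keep_last_k={k}: {n_chars} chars")
```

Smaller values of `keep_last_k` drop more full blocks and therefore give shorter traces, at the cost of leaving only the mementos as a record of earlier reasoning.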

```python
def render_trace(response: str, width: int = 220) -> None:
    p = parse_memento(response)
    print("=" * 72)
    print(f"{len(p['blocks'])} blocks · {len(p['summaries'])} mementos")
    print("=" * 72)
    for i, (b, s) in enumerate(zip(p["blocks"], p["summaries"]), 1):
        # Memento size as a percentage of its block, in characters.
        ratio = len(s) / max(len(b), 1) * 100
        print(f"\n BLOCK {i} ({len(b):,} chars)")
        print(textwrap.indent(textwrap.shorten(b.replace("\n", " "), width=width), "    "))
        print(f" MEMENTO {i} ({len(s):,} chars · {ratio:.1f}% of block)")
        print(textwrap.indent(textwrap.shorten(s.replace("\n", " "), width=width), "    "))
    if p["final_answer"]:
        print("\n★ FINAL ANSWER")
        print(textwrap.indent(
            textwrap.shorten(p["final_answer"].replace("\n", " "), width=width * 2), "    "))

render_trace(first_row["response"])
```

We build a pretty-printer that renders a single reasoning trace in a readable block-by-block format. We display each block next to its paired memento and compute the size of the memento relative to the original block, which makes the compression effect easy to check by hand. Running this renderer on the first example gives a clean qualitative view of how OpenMementos organizes reasoning and preserves important information through mementos.

In conclusion, we gained a clear picture of how OpenMementos represents reasoning as a sequence of detailed blocks paired with short mementos, and we saw why this structure is useful for context compression. We analyzed real examples, computed domain-level statistics, compared block and memento lengths, and saw how compressed traces can reduce token usage while preserving the essential information. We also aligned the streamed dataset format with the full subset, converted the data into an SFT-friendly chat structure, and built tools for inspecting traces more closely. With this end-to-end workflow, we understand the dataset itself and see how it can serve as a practical basis for studying reasoning traces, memento-style summaries, and efficient long-context model behavior.


The post Code Implementation in Microsoft’s OpenMementos with Trace Structure Analysis, Content Compression, and Fine-Tuning Data Optimization appeared first on MarkTechPost.